Codegolf motivation / process


This article is part of my series of articles about my tiny graphics programs.

How I started: the demoscene

It all started with the demoscene, which I have known of since 2004 (probably from the French PureBasic board; PureBasic was one of the first programming languages I learnt). PureBasic had some Amiga / Atari enthusiasts who were sharing sources of 80s/90s demo effects (an offshoot of the scene btw, and most of these programs are not archived; they are part of the scene in my opinion...), which is where I discovered the demoscene. As a teenager I was mostly impressed by 64K programs: most Farbrausch productions, Conspiracy productions, Heaven Seven, Rise, TPOLM, Calodox, Titan and Bypass productions. Programs of 64K and less had a strong influence on me at the time, as I was obsessed for a while with the use of code-generated graphics in my small PureBasic arcade games. I was also impressed by demos on older platforms, such as TBL, Future Crew, Sector One, Checkpoint, Holocaust, Equinox and TCB demos (mainly Atari ST, because I bought an Atari STF at the time!), by other tiny prods such as Cdak (4Kb) or tube (256 bytes), and later on by demos from groups such as ASD or MFX.

One of my first demos was done as a teenager with PureBasic in June 2005. It had ripped tracks and was never released; I just shared it with friends. It was probably my best effort to date at a full demo... :)

I was more of a regular follower / consumer, although I always wished to participate. I then did several effects / 3D engine experiments (some of them on GP2X) but got bored with them very quickly; it turns out that doing demos requires a lot of heavily focused interdisciplinary work (unless done as collaborative work). I still released a 1K intro POC in 2008 as a baby step, which was the result of tinkering with some minimal OpenGL code and MIDI on Windows.

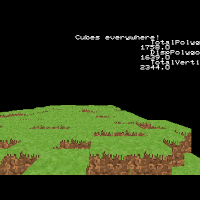

some 3D engine effects ~2012

I then had a short programming period on 8-bit systems, notably the Sega Master System (Z80 assembly). I did a game and a prototype and experimented with several effects for this platform (raster interrupt effects, color cycling, chunky stuff), but these were mostly unreleased / prototypes. I never owned the system but I was attracted by its overall design, which seemed more elegant (and easier) than the NES, which I owned. I quite like the Master System VDP and the link with the MSX computer architecture.

I also had a Sega Saturn period in which I learned some new quirks (first foray into RISC, Hitachi SH-2, delay slots, quad rendering...) with the goal of doing some demos or games. I did some experiments in assembly / C but the complexity of the hardware and all its possibilities was overwhelming. This was probably a 'maximalism' period mixed with nostalgia, where I thought this kind of hardware mess was cool.

I remained interested for a long time afterward in the quadrilateral rendering of the Sega Saturn (also used by the Nvidia NV1 and, in some other form, by the Panasonic 3DO), which was pretty cool and a logical choice after sprite hardware, albeit impractical for the sort of 3D of that era. I attempted several times to replicate the algorithm, only to really succeed later on after joining the ideas of a floor-casting prototype and a tiny integer-only 3D cube software renderer. The result is a simple but tiny forward texture mapper / quad renderer.

some Sega Saturn 3D stuff ~ mid 2008 / 2009 and perhaps later

How I started: codegolf

Around 2008 I started focusing on 1K, 256 bytes and smaller graphics programs; these extremely small binaries felt like magic.

What is the appeal of very small graphics programs? They are a bit arcane at first, so they are intriguing from a technical point of view, and they generally focus on a single effect, so they are quite manageable for a single person with scattered focus. They are also a fun exercise for discovering platforms. Another appeal to me is that they often use "forgotten" display hacks, and most productions deploy these tricks within a completely barren graphics environment close to the hardware, similar to the early days of computing, in which I have a special interest.

The constraint imposed by code golfing is also a fun and educational way to explore / understand algorithms by playing around with their core concept in a creative way without being too scattered.

Around 2018 I started doing regular computer graphics experiments of all kinds in a Processing environment. I realized at some point that some of my experiments were very small algorithmically and could perhaps fit in 256 bytes or less.

I then started looking at the possibility of doing small programs on Linux to prove my point and tried to port some of my earlier experiments, first in C and then in x86 / x64 assembly. I started with C because I was more accustomed to it and because I took it as a challenge to do something tiny and interesting in C on Linux.

I released my first 512 bytes in 2020. I wanted it to be 256 bytes but the compiler output kinda told me that it was not possible without going full assembly. This idea didn't satisfy me at that point so I just released it as a 512 bytes, followed later on by my first 256 bytes, made with a small C framework I was iterating on at the same time. I then tinkered with assembly, which allowed me to port some more experiments.

Although the demoscene is a very competitive subculture, I was never interested in the competitive aspect (I sparingly accept challenges though!). I am more attracted to its creative content: the creativity / trickery people deploy under constraints, the pleasing visuals that feel like magic, and sometimes the overall aspect of some productions such as Live Evil, with a combination of visuals and sounds that feels like psychedelics to my senses (note: I don't use any!). I also love the puzzle aspect of optimizing programs for size and discovering forgotten / new tricks.

There is also something interesting to me about small programs in terms of elegance, low energy expenditure (efficiency) and how easy it is to share and replicate the information they contain.

Real-time vs procedural graphics

Many of my programs fall into the "procedural graphics" category of the demoscene, generating non-animated visuals. This is partly due to personal preference and partly because I find much greater creative freedom in the algorithms I can use, even though it might initially seem less exciting to viewers.

Procedural graphics programs seem to be a "recent" category for the demoscene, as it appears that in the early 2000s most if not all demoscene releases were programs with animated graphics, and procedural graphics programs came out slowly afterward (graphics compos always existed at parties, but these were not just for standalone programs). It is rather funny to see how confused people were by the lack of animation at the time, because they expected what the demoscene 'originated from' and improved upon (cracktros, then independent, impressive "in your face" animated programs). I personally find that procedural graphics close the time loop, as cracktros began in the early 80s as non-animated programs just showing a logo and a few other elements; there was also other non-animated program art at that period, perhaps motivated by BASIC. This is also valid for elements such as gfx etc. that are not necessarily embedded in programs but are released as part of the scene; they are of the same importance.

My "theory" on the rise of procedural graphics is that it has to do with the rise of tiny programs (especially 4K and less, where non-real-time is perhaps easier at first) and with the optimization part of real-time demos becoming much less relevant (due to all the compute?), so coders started to look for other challenges, and tiny programs offered one in terms of binary size. The programmable graphics pipeline may also be relevant to the rise of procedural graphics.

About NFTs

Part of my original motivation could also be explained as a reaction to the NFT market (and bits of AI later on): there were several attempts to make quick money in ~2021 from the experiments I did... I am not opposed to NFTs per se if they allow individuals a different way to monetize their art, but I dislike the market dynamics and the fictive value (commodification) that the NFT market associates with digital art.

About AI

I am not opposed to AI at all. I see huge potential, mainly because the huge data processing can be seen as an efficient compression of our collective intelligence with the ability to poke around in it. I remain neutral however due to potential future concerns. It has high requirements (computational, storage) unavailable to me and thus doesn't interest me beyond curiosity. It is also bad at low-level graphics code golfing and I am fairly certain it will still struggle with this for some time.

Rules

Ok, so there are some self-imposed rules that I try to roughly follow for my code golfing stuff (they align with my interests):

- I only do graphics code golfing: tiny code that produces something on the screen (static or animated)

- I tend not to use APIs much (with the exception of mandatory stuff) and prefer software rendering / doing everything as close to the hardware as possible

- I prefer assembly or languages that allow close-to-the-hardware code / executables such as C (I am not much interested in high-level language code golf or VM stuff, with the exception of fantasy computers); the goal is optimizing for binary size instead of source code size, and this distinction leads to different results

Process

My code golfing process starts with prototyping graphics experiments in a high-level environment such as p5js (I sometimes also port them to C), trying to make something I am satisfied with. Once I have the base algorithm I tend to tweak the code (parameters etc.) blindly, without too much thinking, just looking at the end result. I find it relaxing; interesting stuff may emerge sometimes, and with enough tinkering I roughly know what works and what is going on on the screen, so I can direct the process. I also tend to roughly guess the size of the binary once optimized, which also helps the direction.

There are also some intros where I had a rough idea of what I wanted to do. Most ideas come from other people (design, algorithm etc.); I also use the Twitter platform heavily, since there is a lot of interesting stuff coming from other art scenes such as generative ones (Processing etc.).

Once I have working code I am satisfied with, I tidy it and optimize it as much as I can with a focus on the target platform: reducing expressions, simplifying, using bitwise operations, making structural changes etc. There are several tools that can help, such as Wolfram Alpha ("find formula for sequence x,y,z,w..." for example), Godbolt or even AI-powered tools.
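As a small illustration of "reducing expressions" with bitwise operations, here is a hedged C sketch. The checkerboard pattern and the function names `checker_plain` / `checker_golfed` are hypothetical examples, not taken from any of my releases. Both functions return the same value for every input, since the parity of a sum equals the XOR of the low bits.

```c
/* Readable prototype: 8x8 checkerboard, returns 1 for lit cells.
   (Hypothetical example pattern, chosen only for illustration.) */
unsigned checker_plain(unsigned x, unsigned y)
{
    return ((x / 8) + (y / 8)) % 2;
}

/* Reduced form: the parity of (x/8 + y/8) is bit 3 of x XOR y,
   so the two divisions, the add and the modulo collapse into
   one XOR, one shift and one AND. */
unsigned checker_golfed(unsigned x, unsigned y)
{
    return ((x ^ y) >> 3) & 1;
}
```

Whether the reduced form actually saves bytes depends on the compiler and target, which is where a tool like Godbolt helps: you compare the generated instructions rather than the source.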

The low-level optimization phase sometimes requires multiple tries to get something worthwhile, and in some rare cases I may have to cut features. If I do, there is a high probability that I will ditch the code for a while, to think about it later and accumulate new tricks or ideas.

I sorta heavily use the Minsky circle algorithm to draw interesting stuff (as an approximation for sin/cos, but not only...). I tweaked the parameters for a long time, so I have a big pool of sketches that I can start from, most of them shrinkable to 256 bytes I believe.
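For readers who don't know it, the Minsky circle recurrence (HAKMEM item 149) can be sketched in a few lines of C. This is a floating-point illustration under my own naming (`minsky_step`, `minsky_invariant` and `eps` are hypothetical), not the exact variant used in any particular intro; a size-optimized integer version would typically replace the multiply by a shift.

```c
/* One step of the Minsky circle recurrence.
   The y update deliberately reuses the freshly updated x: this is
   what keeps the orbit closed instead of spiralling outward. */
void minsky_step(double *x, double *y, double eps)
{
    *x -= eps * *y;
    *y += eps * *x;
}

/* Quadratic form conserved by the recurrence in exact arithmetic:
   the points stay on the ellipse x*x - eps*x*y + y*y = constant,
   which is nearly a circle when eps is small. */
double minsky_invariant(double x, double y, double eps)
{
    return x * x - eps * x * y + y * y;
}
```

Starting from (1, 0) with a small `eps` and plotting each (x, y) traces an almost circular ellipse with no call to sin or cos; tweaking the two `eps` factors independently or mixing in other terms is where the "not only" part begins.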

My advice for someone who wants to start is to prototype a lot, approach different ideas (fractals, affine transforms etc.) or rendering methods, and try to shrink them to build some sort of bag of tiny tricks that you can pick from. Deciphering other programs is also a quick way to gain skills, and old sources such as SIGGRAPH papers can lead to ideas. Although it is not my first choice, you can also go social: going to parties, looking for online resources or joining a community Discord.

My platforms of choice

TIC-80

What I like about the TIC-80 platform is the severe graphics restrictions in terms of resolution and colors, combined with modern languages (JavaScript, Lua etc.) and an easy graphics API. It is a very pleasant framework to code for, without hassle, and with graphics restrictions similar to early 8-bit computers but without the oddities. I'd actually love a similar, simple hardware platform with 60s / 70s / 80s tech but without the oddities of all the existing legacy hardware (= way more restricted but using modern ideas). I'd like easy repairability as well, or a platform that could be built or hacked from scratch, perhaps even from salvaged parts or old tech such as magnetic-core memory.

RISC OS / Acorn hardware

RISC OS / Acorn platforms are my go-to for graphics code golfing from the late 80s up to now. I love programming for the early Acorn hardware (mainly due to the early ARM CPU / RISC architecture), and the programs may still work nowadays on modern ARM-based hardware such as the Raspberry Pi...

The only issue with code golfing on this platform is that early ARM has lower code density than x86, mainly due to the fixed cost of 4 bytes per instruction, but that is also what makes it cool and there are workarounds (CodePressor). There are also some oddities, such as aligned writes, but it has many advantages as well due to its RISC nature.

It is a great alternative to the commonly used DOS platform for demoscene codegolf. DOS always has an edge though, due to its very short graphics setup with old modes such as mode 13h, whereas the RISC OS setup takes about as many bytes as the x86 + Linux fbdev platform unless some shortcuts are taken (staying at desktop resolution, no double buffering, no vsync etc.).

Platforms with RISC CPUs or related may also be of high interest to me!

Linux

Linux / x86 has been my main system since ~2010 and it is my go-to for modern graphics code golfing.

A fairly complete guide for code golfing on Linux is available here.

On Linux I nowadays write programs in x86 assembly language with a custom ELF header, and I use the Linux framebuffer device (fbdev), which still works on modern Linux distributions (it has had generic support in the kernel since ~1997). The API is quite minimal and requires two syscalls to be able to output some pixels on the screen, so the end setup is fairly small, and it works for high resolutions and color depths.
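To give an idea of how small that setup is, here is a hedged C sketch of the two calls involved, assuming they are `open` on the device followed by `mmap`. The helper name `fb_map` is hypothetical, and the caller is assumed to already know the buffer size (a tiny intro usually hard-codes resolution and depth rather than querying them).

```c
#include <fcntl.h>
#include <stddef.h>
#include <stdint.h>
#include <sys/mman.h>
#include <unistd.h>

/* Map `bytes` bytes of a framebuffer device (conventionally /dev/fb0)
   as 32-bit pixels. Returns NULL if the device cannot be opened or
   mapped. Assumption: the mode is 32 bits per pixel. */
uint32_t *fb_map(const char *path, size_t bytes)
{
    int fd = open(path, O_RDWR);                 /* syscall 1 */
    if (fd < 0)
        return NULL;

    void *p = mmap(NULL, bytes, PROT_READ | PROT_WRITE,
                   MAP_SHARED, fd, 0);           /* syscall 2 */

    /* The mapping stays valid after close; a size-coded intro
       would simply skip this call and the error checks. */
    close(fd);
    return p == MAP_FAILED ? NULL : (uint32_t *)p;
}
```

With a 1920x1080 32-bit mode, for instance, `fb_map("/dev/fb0", 1920 * 1080 * 4)` would return a pointer where writing `fb[y * 1920 + x] = color` puts a pixel on the screen, provided the program runs on a console with access to the device and the line stride matches the visible width.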

I tend to use SIMD instructions, which helped with saturation arithmetic early on. Nowadays I also use them to pack multiple operations into a single instruction or for floating-point arithmetic; this has a slight impact on the programs' compatibility.
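As an illustration of what saturation arithmetic buys for pixels, here is a scalar C model of an unsigned saturating byte add, the operation that a packed instruction such as PADDUSB performs on many bytes at once. The function names `adds_u8` and `adds_pixel` are hypothetical, and this is only a sketch of the behaviour, not how it is written in assembly.

```c
#include <stdint.h>

/* Unsigned saturating add on one byte: the result clamps at 255
   instead of wrapping around to a small (dark) value. */
uint8_t adds_u8(uint8_t a, uint8_t b)
{
    unsigned s = (unsigned)a + b;
    return s > 255 ? 255 : (uint8_t)s;
}

/* Apply the saturating add to each of the four 8-bit channels of a
   packed 32-bit pixel. A packed SIMD add does the equivalent of this
   whole loop, for several pixels, in a single instruction. */
uint32_t adds_pixel(uint32_t p, uint32_t q)
{
    uint32_t r = 0;
    for (int i = 0; i < 32; i += 8)
        r |= (uint32_t)adds_u8((p >> i) & 0xFF, (q >> i) & 0xFF) << i;
    return r;
}
```

Accumulating brightness this way lets overlapping shapes blow out to white rather than wrap to black, without any compare or branch in the inner loop.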

Linux productions still have a high binary size cost (even more so if you output sound) compared to platforms such as DOS, due to the ELF header (too bad that a.out was superseded by ELF around 1995, as it is tinier than ELF). If you take into account the framebuffer API and the header, you already have about 70 bytes taken out of your binary, which is already more than 1/4 of a 256 bytes target. You also have to handle a packed RGBA format if you go for modern color depths.

Because of the ELF header, targeting DOS may sometimes be better when these 70 bytes are needed (a COM file has no header to speak of and the graphics setup is small). There are not many differences with x86 Linux, although it is a bit trickier with all the available display modes and compatibility issues. Using AVX instructions may also require additional setup code, but going for common low-resolution modes and SSE works out of the box. High resolution on DOS (VESA) can be tricky as well, with a lot of compatibility issues, but it can be done with a ~20 bytes setup with some shortcuts.
